Let me start with the conclusion: it’s an amazing technology that is a bit too complicated for an average admin.

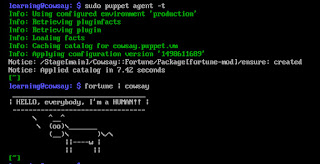

The Puppet Learning VM covers most of the concepts and how they relate to each other. Everything is built in the form of configuration files. You start with one simple application and move towards its customization, extension and orchestration.

To grasp the concepts, I also signed up for the self-paced (free) training modules that Puppet publishes on its website. The only annoying thing: you get multiple emails when you register for a learning unit. An average unit is about 10 minutes of information and there are about a dozen of them, so you get 30+ emails when going through the videos. I find it a bit excessive (maybe that’s just my personality).

To summarize:

- The building blocks (called Resources) are things like Files, Packages and Services

- The blocks are organized into Classes so they can be managed together

- Facter is the tool that collects the current state of the end system in full detail (including things Puppet cannot necessarily change, e.g. OS version, MAC address, etc.)

- Most of the Modules, Services and Manifests are not part of Puppet itself; they are written by the community and shared in a repository called the Forge

- Additionally, Roles and Profiles are used to categorize Resources and end-user systems further, which makes a Puppet implementation scalable
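To make the list above a bit more concrete, here is a minimal sketch of how these pieces might fit together in a manifest. It is not taken from the Learning VM: the class names (`example_ntp`, `profile::time`, `role::base_server`), the choice of NTP, the file path and the service name `ntpd` are all assumptions picked for illustration, and the package and service names differ between operating systems.

```puppet
# Hypothetical class that groups three resources so they are managed together.
class example_ntp {
  # Package resource: make sure the software is installed.
  package { 'ntp':
    ensure => installed,
  }

  # File resource: manage the configuration file.
  # $facts['os']['family'] is a value collected by Facter; it is only read here.
  file { '/etc/ntp.conf':
    ensure  => file,
    content => "# Managed by Puppet on ${facts['os']['family']}\nserver 0.pool.ntp.org\n",
    require => Package['ntp'],
    notify  => Service['ntpd'],
  }

  # Service resource: keep the daemon running and enabled at boot.
  # The service name 'ntpd' is an assumption and varies by platform.
  service { 'ntpd':
    ensure => running,
    enable => true,
  }
}

# Hypothetical profile: wraps the component class for one piece of the stack.
class profile::time {
  include example_ntp
}

# Hypothetical role: describes a kind of machine as a set of profiles.
class role::base_server {
  include profile::time
}
```

In a real module each class would normally live in its own file so that Puppet can autoload it; they are shown together here only to keep the sketch short. A node would then be assigned the single role, and the role pulls in everything else.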

Most of the Learning VM modules are well written. I had a bit of a challenge with ‘quest’, a program that is supposed to monitor the student’s progress (I’m guessing it also prepares your learning environment for specific exercises). Sometimes quest didn’t recognize completed tasks or got confused by sequential tasks, e.g. Task 3 changes a file that was already changed in Task 1, which marks Task 3 as completed and Task 1 as not completed. Also, the “Defined Resource Types” quest doesn’t really have its steps outlined; it is more of one monolithic piece of text.

As a last note on the Puppet Learning VM: the troubleshooting chapter is very helpful and allowed me to resolve most of the issues I faced.

Overall, if you need to manage and standardize your environment, Puppet is definitely a tool to look at. I must disagree with the statement from the Packet Pushers episode “Datanauts 093: Erasure Coding And Distributed Storage”, where Puppet is mentioned as not being an advanced solution. Puppet can do a lot and deserves to be in demand.